Use boring languages with LLMs

I'm Jacob. I run Sancho Studio, a software consulting group that helps companies with technical leadership, strategy, and security.

I keep coming back to this idea that consistency compounds. I’ve noticed it acutely as a consultant working across many different projects over the last two years. Large language models amplify the problems of inconsistent technologies and quietly reinforce consistent ones. The languages and ecosystems that suffer from the most fragmentation produce the worst agentic output, and the ones with the strongest conventions produce the best. I think this effect will increasingly determine which tools survive in the paradigm of massive models trained on large corpora.

Even if code is cheap, running inference is a gamble. It’s impossible to know whether, at any moment, the model will decide to install a package or produce a bizarre coding pattern from 2019. If we accept that we’re gambling with tokens, we should bet on the regions of the model’s learned distribution that are most consistent and most heavily reinforced, because those produce median output. For software development this is actually ideal, as the median program is typically doing the basics: processing information, reading and writing files, responding to network requests, and so on.

Before AI, engineers complained about languages which reinvented themselves on what felt like an annual basis. These complaints were real but mostly aesthetic and symptomatic of a frustration that humans needed to maintain or keep up with needlessly changing ecosystems.

If we look back, the 2024 State of JS survey describes a relatively fragmented ecosystem. For a human, fragmentation is annoying. For a model trained on the public corpus of all of it, fragmentation is closer to a problem that needs to be solved in reinforcement learning or in agent harnesses (e.g. the Claude Code leak showed us that Anthropic hard-coded some bias for JavaScript frameworks).

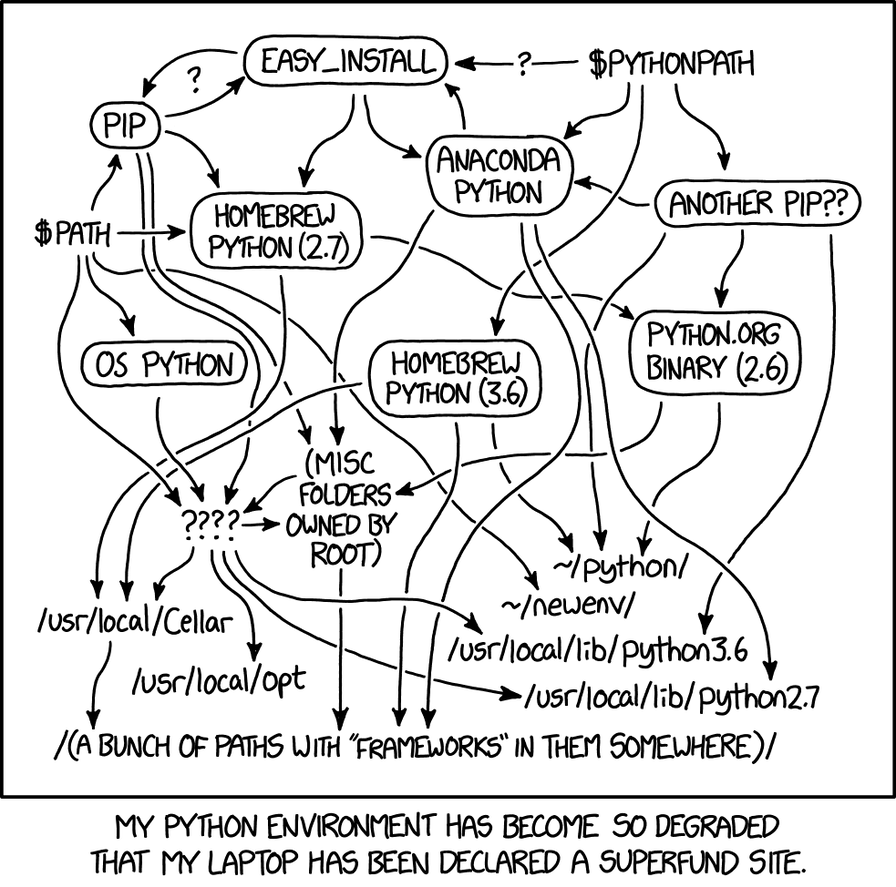

Python is the same story but sung in a different key. Asking a simple question like “which package manager are you using?” produces a matrix of language versions, package manager versions, and OS compatibility, which I find completely mind-numbing as a technical lead.

Should one use pip, poetry, or uv? Does your toolchain matter, or are you cross-compiling? How do you know if a Python package silently has a link to a C dependency? Are you using async, or have you reached for a task queue instead? Django or FastAPI?

From a model’s standpoint, there are simply too many ways to write any of this, and the corpus reflects every one of them with roughly equal weight unless recency bias is introduced at training time. What this means is straightforward to me and should be to you as well: languages and ecosystems with low variance in their training corpus are represented better and executed more reliably by coding agents. In high-dimensional vector spaces, cosine similarity between training examples is, loosely speaking, the substrate on which the model’s attention and MLP layers learn to predict the next token. A consistent corpus produces consistent inference tokens.

This pattern holds in other languages. In agentic work, inference on Rails projects produces more consistent output than on generic JavaScript backends, not because Ruby is a better language in some Platonic sense, but because there is essentially one Rails. There are at least a dozen production-grade JavaScript frameworks, each offering a different Venn diagram over the same default features. Convention over configuration was a win for human programmers because flexibility is overrated and constraints are liberating. Twenty years later the same is true for machines, and arguably more so. The model is just solving for which outcome is most likely.

Go embodies this principle best and almost by accident. For years Golang resisted the conveniences and higher-level expressions that programmers kept demanding (generics in particular) and the resistance was deeply unpopular among working developers. I was one of those developers; I have written hundreds of thousands of lines of Go, and as a programmer I found the language often infuriating.

I know some of the people on the Golang team and admire their headstrong commitment to the future of programs. I have come to think Google produced, mostly inadvertently, the best language in the world for this moment. Out of the box, Go gives an agent a set of advantages no other mainstream language provides in the same combination.

The concurrency model is the first of these. Goroutines are a far more tractable primitive for coding agents than threads, callbacks, async/await, or any of the colored-function regimes that dominate elsewhere. They are simple, type-safe, and ubiquitously used in the corpus the model was trained on. There is no question of what color your function is, because the question does not exist.

```go
results := make(chan string, len(urls))
for _, u := range urls {
	go func(u string) {
		resp, err := http.Get(u)
		if err != nil {
			results <- err.Error()
			return
		}
		defer resp.Body.Close()
		results <- resp.Status
	}(u)
}
for range urls {
	fmt.Println(<-results)
}
```

The standard library is the second. net/http alone runs a non-trivial fraction of the internet’s microservices, and the cryptography packages (funded and maintained by Google) are world-class. I have shipped production systems at Zoom and Keybase that depended on these defaults and never once needed to reach outside them.
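To make the point about the cryptography packages concrete, here is a minimal sketch of signing and verifying a message using only the standard library. The helper names `sign` and `verify` and the demo key are hypothetical, chosen for illustration; only `crypto/hmac`, `crypto/sha256`, and `encoding/hex` are real packages.

```go
package main

import (
	"crypto/hmac"
	"crypto/sha256"
	"encoding/hex"
	"fmt"
)

// sign returns the hex-encoded HMAC-SHA256 of msg under key.
// (Hypothetical helper, not part of any library.)
func sign(key, msg []byte) string {
	mac := hmac.New(sha256.New, key)
	mac.Write(msg) // Write on a hash.Hash never returns an error
	return hex.EncodeToString(mac.Sum(nil))
}

// verify reports whether sig is a valid hex signature for msg under key.
// hmac.Equal compares in constant time to avoid leaking timing information.
func verify(key, msg []byte, sig string) bool {
	want, err := hex.DecodeString(sig)
	if err != nil {
		return false
	}
	mac := hmac.New(sha256.New, key)
	mac.Write(msg)
	return hmac.Equal(mac.Sum(nil), want)
}

func main() {
	key := []byte("demo-key-not-for-production") // assumption: illustrative key only
	msg := []byte("payload")

	sig := sign(key, msg)
	fmt.Println(verify(key, msg, sig))                // prints "true"
	fmt.Println(verify(key, []byte("tampered"), sig)) // prints "false"
}
```

There is no third-party dependency to choose here, which is the kind of decision an agent cannot get wrong because it never has to make it.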

```go
package main

import (
	"fmt"
	"log"
	"net/http"
)

func main() {
	http.HandleFunc("/healthz", func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprintln(w, "ok")
	})
	// ListenAndServe only returns on failure, so surface the error.
	log.Fatal(http.ListenAndServe(":8080", nil))
}
```

The toolchain is the third, and possibly the most underrated. Go has, by design, one right way to do most things: gofmt, go vet, and now go fix enforce a single canonical style with no negotiation. The best possible combination for a language model is a consistent corpus to train on, plus one-right-way tooling for testing and feedback at runtime. gopls is a fantastic guardrail for agents because it provides real-time semantic feedback, and golangci-lint lets you statically enforce coding styles and primitives without having to prompt the agent into compliance.
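As a rough illustration of the single-canonical-style point, the sketch below runs two differently spaced versions of the same function through `go/format`, the standard library package that applies gofmt-style formatting. The `canonicalize` helper and the sample sources are assumptions made up for this example.

```go
package main

import (
	"fmt"
	"go/format"
)

// canonicalize formats Go source the way gofmt would.
// (Hypothetical helper wrapping the real format.Source function.)
func canonicalize(src string) (string, error) {
	out, err := format.Source([]byte(src))
	if err != nil {
		return "", err
	}
	return string(out), nil
}

func main() {
	// Two spellings of the same function, differing only in spacing,
	// as two different agents (or humans) might emit them.
	a := "package demo\n\nfunc Add(a,b int) int {\n    return a+b\n}\n"
	b := "package demo\n\nfunc   Add( a , b int )int{\n\treturn a +b\n}\n"

	fa, err := canonicalize(a)
	if err != nil {
		fmt.Println("format error:", err)
		return
	}
	fb, err := canonicalize(b)
	if err != nil {
		fmt.Println("format error:", err)
		return
	}

	fmt.Println(fa == fb) // prints "true": both collapse to one form
	fmt.Print(fa)
}
```

Note that the formatter normalizes spacing and indentation but preserves the author's line breaks, so this is a guardrail on style rather than a full rewrite of structure.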

```
$ go vet ./...
./user.go:22:2: result of fmt.Errorf call not used
./user.go:38:9: declaration of "err" shadows declaration at line 34

$ golangci-lint run
user.go:51:6: exported func LoadUser should have comment (revive)
user.go:63:3: if block ends with return, drop this else (golint)
```

The fourth advantage is performance with garbage collection. Language models are inconsistent in managing memory; this is a well-documented limitation, and not one that is going away soon. Rust enforces memory safety at the type and borrow-checking level, which is excellent for humans and a constant fight for agents. Writing C or C++ with a coding agent is harder still, because the training data is full of memory bugs, use-after-free errors, and decades of hard-won mistakes that the model is just as capable of reproducing as it is of avoiding. Go gives you native-like performance without asking the agent to manage memory directly.

```go
func parseLines(r io.Reader) []string {
	var out []string
	s := bufio.NewScanner(r)
	for s.Scan() {
		out = append(out, s.Text())
	}
	return out
}
```

The fifth is the small, known set of footguns. nil pointers can be difficult for human engineers to trace down in production stack traces, but given the right tools agents are surprisingly good at it. The set of things that can go wrong in idiomatic Go is bounded in a way that the set of things that can go wrong in, say, Python with arbitrary metaclasses simply is not.
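A small sketch of what "bounded" can look like in practice for the nil pointer case: the failure is either guarded with an explicit check or surfaces as a runtime panic that can be caught and reported. The `User` type and the `displayName` and `safely` helpers are hypothetical names invented for this illustration.

```go
package main

import "fmt"

// User is a hypothetical type used only for this illustration.
type User struct{ Name string }

// displayName guards against the nil pointer footgun explicitly.
func displayName(u *User) string {
	if u == nil {
		return "(unknown)"
	}
	return u.Name
}

// safely runs f and converts a panic, such as a nil dereference,
// into an ordinary error value.
func safely(f func()) (err error) {
	defer func() {
		if r := recover(); r != nil {
			err = fmt.Errorf("recovered: %v", r)
		}
	}()
	f()
	return nil
}

func main() {
	var u *User // nil on purpose

	fmt.Println(displayName(u)) // prints "(unknown)"

	// Dereferencing u without a guard panics at runtime;
	// safely turns that panic into an error we can inspect.
	err := safely(func() { _ = u.Name })
	fmt.Println(err != nil) // prints "true"
}
```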

```go
data, err := os.ReadFile(path)
if err != nil {
	return fmt.Errorf("read config %q: %w", path, err)
}
```

Reading this, the right reaction is not “Go is the best language” but something more specific: this language and toolchain can write the majority of non-visual software a working engineer would want. Consider using Go with an agent to build the next CLI, backend server, or agent orchestrator.

If any of this resonated with you, or if you’re looking for a technical lead to help your team deliver consistently, get in touch.